Debugging in 2026: Tools & Techniques

M Chetmars

Author

Most modern systems do not fail loudly anymore.

They rarely crash in a way that leaves a clear trace behind. What they do instead is drift. Behaviour changes subtly. Performance erodes unevenly. Users notice something is off long before engineers can point to a concrete fault.

This quiet failure mode is not accidental. It is the natural outcome of how software is built in 2026.

The short answer

Debugging in 2026 is no longer about locating and fixing bugs.

It is about understanding why complex systems behave the way they do — often without ever producing a clear error.

If debugging still begins with hunting for a broken line of code, it usually ends too late.

How debugging has actually changed

| Then | Now |
| --- | --- |
| Failures were explicit | Failures are gradual and behavioural |
| Errors explained what went wrong | Signals hint at what might be wrong |
| Logs were the primary source of truth | Context is spread across many weak signals |
| Local reproduction was expected | Production is often the only real environment |
| Debugging followed failure | Debugging shapes design decisions |

This shift is easy to underestimate because, on the surface, the tools look familiar. Underneath, the mental model has changed entirely.

Debugging without a clear failure

One of the most uncomfortable realities for experienced engineers today is that many debugging sessions begin without a clear starting point. Nothing is obviously broken. There is no smoking gun. The system is still running, responding, and technically doing what it was designed to do.

And yet, it no longer behaves the way the team expects.

Latency increases only during specific traffic patterns. Certain users experience inconsistent outcomes. Costs rise slowly, without any single deployment to blame. These are not edge cases — they are the dominant failure modes of large, distributed systems.

In this context, the idea of a “bug” becomes less useful. Bugs imply something discrete and isolated. Modern failures rarely are. They emerge from interactions, timing, scale, and accumulated assumptions that no longer hold.

Debugging, then, stops being an act of correction and becomes an act of interpretation.

Why correctness is no longer enough

A system can be correct and still be unhealthy.

This is one of the hardest lessons for teams that grew up with deterministic architectures.

Every service might return valid responses. Error rates can remain within agreed thresholds. Automated tests continue to pass. From a traditional perspective, everything looks fine.

From a user’s perspective, it is not.

The gap between technical correctness and experiential quality is where most modern debugging lives. Understanding that gap requires more than reading logs or stepping through code. It requires seeing how behaviour changes across time, load, and context.

This is why debugging has moved away from the idea of “finding what’s wrong” and towards understanding why the system reacts the way it does under certain conditions.
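A small sketch of what "seeing how behaviour changes across time" can look like in practice: compare a tail-latency percentile between a baseline window and the current window, even though every request succeeded. The sample data, the `p95` and `drift_ratio` helpers, and the 20% threshold are illustrative assumptions, not measurements or recommendations from any real system.

```python
from statistics import quantiles


def p95(samples):
    """95th percentile using the standard library's inclusive method."""
    return quantiles(samples, n=100, method="inclusive")[94]


def drift_ratio(baseline, current):
    """How far the p95 of the current window has moved relative to the baseline."""
    return p95(current) / p95(baseline)


# Hypothetical latency samples in milliseconds; every request "succeeded".
baseline = [100 + (i % 10) for i in range(200)]
# In the current window, one request in eight is 60 ms slower.
current = [100 + (i % 10) + (60 if i % 8 == 0 else 0) for i in range(200)]

ratio = drift_ratio(baseline, current)
if ratio > 1.2:  # the 20% threshold is an assumption for illustration
    print(f"p95 latency drifted by {ratio:.2f}x with no errors raised")
```

Nothing here would trip an error-rate alert, which is the point: the system stays correct while the experience gets worse for a minority of requests.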

The quiet decline of log-centric debugging

Logs still exist in 2026. They are still necessary.

They are just no longer sufficient.

In many production incidents, the logs do not reveal anything obviously incorrect. They show that events happened, requests were handled, and responses were returned. What they fail to capture is the shape of the system over time.

Logs answer what happened.

They rarely explain why the system behaved that way.

As systems grew more distributed and asynchronous, debugging required a different kind of visibility — one that could connect weak signals across services, timelines, and boundaries. This is where observability quietly replaced logging as the centre of gravity.

Observability does not promise certainty. It offers inference. It allows teams to form and test hypotheses about system behaviour without relying on a single authoritative source of truth.

Debugging, in this sense, becomes less about certainty and more about sense-making.
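One common way to connect weak signals across services is to attach a shared identifier to every event a request produces, so the events can be joined into a single timeline later. The sketch below uses only the Python standard library; the field names (`trace_id`, `service`, `duration_ms`) and the three service names are hypothetical, chosen for illustration rather than taken from any specific observability product.

```python
import json
import uuid
from collections import defaultdict


def make_event(trace_id, service, message, duration_ms):
    """Build one structured log event as a JSON string."""
    return json.dumps({
        "trace_id": trace_id,
        "service": service,
        "message": message,
        "duration_ms": duration_ms,
    })


def group_by_trace(lines):
    """Join events from many services into one timeline per trace_id."""
    timelines = defaultdict(list)
    for line in lines:
        event = json.loads(line)
        timelines[event["trace_id"]].append(event)
    return dict(timelines)


def slowest_hop(timeline):
    """Return the service that spent the most time within one trace."""
    return max(timeline, key=lambda event: event["duration_ms"])["service"]


# Hypothetical services emitting events for the same request.
trace_id = str(uuid.uuid4())
log_lines = [
    make_event(trace_id, "gateway", "request received", 4),
    make_event(trace_id, "pricing", "quote computed", 180),
    make_event(trace_id, "checkout", "order stored", 35),
]
timelines = group_by_trace(log_lines)
print(slowest_hop(timelines[trace_id]))
```

Each individual line still only says what happened. The join across services is what lets a team start asking why.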

When reproduction stops being realistic

For years, reproduction was treated as a prerequisite for debugging. If an issue could not be reproduced locally, it was often dismissed as unfixable or not yet understood.

That assumption no longer holds.

Many failures in modern systems depend on conditions that only exist in production: real user behaviour, organic traffic spikes, data volume, timing, and third-party interactions. Reproducing these conditions locally is often impossible — and sometimes misleading.

As a result, debugging has shifted towards observing failures where they occur, rather than trying to recreate them elsewhere. This has changed how teams think about environments, safety mechanisms, and tooling.

The goal is not perfect reproduction.

The goal is sufficient clarity.

Debugging systems you did not fully author

Another quiet change is the nature of the code itself. Engineers increasingly debug systems they did not fully write — and sometimes cannot fully explain.

AI-generated code, scaffolding tools, managed services, and deeply abstracted frameworks all introduce behaviour that usually works as intended but is opaque by nature. Reading the code does not always clarify intent. In some cases, the code changes faster than humans can meaningfully reason about it.

This forces debugging to move up a level. Instead of asking how something is implemented, teams ask what it was supposed to achieve, under which constraints, and in which context.

When those assumptions are wrong, no debugger can compensate.

Debugging as an architectural property

By 2026, the most effective teams treat debugging not as a skill exercised under pressure, but as a property designed into the system.

They invest in clarity before incidents happen. They constrain complexity deliberately. They make system behaviour observable by default, not as an afterthought. When failures occur, they surface early and intelligibly.

This is not about having better engineers.

It is about designing systems that are easier to understand when they inevitably misbehave.

And this is where debugging in 2026 truly begins — long before anything breaks.

When observability becomes the primary debugging surface

By the time an engineer opens a debugger in 2026, most of the important information has already been lost. The interesting questions are rarely answered by inspecting a single process or stepping through a function. They live in the space between services, across time, and under specific conditions.

This is why observability has quietly replaced traditional debugging tools as the primary surface for understanding failures.

Not because observability tools are better debuggers, but because they reflect how modern systems actually fail.

| What teams used to look for | What they need to see now |
| --- | --- |
| Is there an error? | How does behaviour change over time? |
| Which request failed? | Which patterns correlate with failure? |
| What stack trace was produced? | What dependencies amplify small issues? |
| Where did it break? | Under which conditions does it degrade? |

Observability does not tell you what is broken. It helps you form a theory about why the system behaves the way it does. Debugging then becomes the process of validating or disproving that theory.
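Validating a theory often comes down to splitting the same requests by one condition at a time and checking whether the slow ones cluster. The sketch below is a minimal version of that idea; the request records, the attribute names (`region`, `cache`) and the 500 ms threshold are all made up for illustration.

```python
from collections import defaultdict


def slow_rate_by(requests, attribute, threshold_ms=500):
    """Fraction of slow requests for each value of one attribute."""
    totals = defaultdict(int)
    slow = defaultdict(int)
    for req in requests:
        key = req[attribute]
        totals[key] += 1
        if req["latency_ms"] > threshold_ms:
            slow[key] += 1
    return {key: slow[key] / totals[key] for key in totals}


def spread(rates):
    """Gap between the worst and best cohort; a wide gap supports the hypothesis."""
    return max(rates.values()) - min(rates.values())


# Hypothetical request records; in practice these would come from traces.
requests = [
    {"region": "syd", "cache": "hit", "latency_ms": 120},
    {"region": "syd", "cache": "miss", "latency_ms": 900},
    {"region": "mel", "cache": "hit", "latency_ms": 110},
    {"region": "mel", "cache": "miss", "latency_ms": 870},
    {"region": "syd", "cache": "hit", "latency_ms": 130},
    {"region": "mel", "cache": "miss", "latency_ms": 95},
]

for attribute in ("region", "cache"):
    rates = slow_rate_by(requests, attribute)
    print(attribute, rates, round(spread(rates), 2))
```

In this toy data the "region" theory is disproved (both regions are equally slow) while the "cache miss" theory survives. A real investigation would need far more samples before drawing that conclusion.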

Tools matter, but only after the mental model changes

There is a persistent temptation to treat debugging improvements as a tooling problem. New platforms promise better traces, smarter alerts, richer dashboards, or AI-powered explanations. These tools can be valuable, but they only work when the underlying mental model is already sound.

Without that shift, better tools often produce more noise, not more clarity.

Teams that struggle with debugging in 2026 usually do not lack tools. They lack alignment between what the system is designed to do and what engineers expect it to do under pressure. When those expectations are wrong, no dashboard can compensate.

This is why mature teams evaluate debugging tools based on failure modes, not features.

| Tooling question | More useful reframing |
| --- | --- |
| What does this tool offer? | Which failure modes does it make visible? |
| How advanced is the UI? | Does it reduce ambiguity under stress? |
| Does it integrate well? | Does it preserve context across boundaries? |
| Can it detect anomalies? | Can it support hypothesis-driven debugging? |

The most effective debugging setups are often simpler than expected. They prioritise continuity of context over volume of data.

Debugging AI-generated systems requires restraint, not inspection

As AI-generated code becomes more common, many teams instinctively try to debug it the same way they debug human-written code. This rarely works.

The problem is not that AI-generated code is unreadable. The problem is that reading it does not explain its behaviour.

When code is generated based on prompts, constraints, and training data, its intent lives outside the code itself. Debugging then shifts away from inspection and towards validation:

Is the prompt still accurate?

Have the constraints changed?

Is the surrounding context stable?

Are we relying on assumptions the model no longer shares?

In this environment, restraint becomes a debugging skill. Instead of digging deeper into generated implementation details, effective teams place guardrails higher up the stack. They constrain inputs, monitor outputs, and focus on behaviour at the boundaries.

| Traditional instinct | AI-era adjustment |
| --- | --- |
| Read the code carefully | Validate intent and constraints |
| Step through execution | Observe outcomes across scenarios |
| Fix the implementation | Refine prompts and guardrails |
| Trust local reasoning | Monitor systemic behaviour |

This does not make debugging easier. It makes it different — and more architectural.
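As a rough illustration of placing guardrails at the boundary rather than reading the implementation, the sketch below wraps an opaque function with an input check, an output check, and counters. The `guarded` helper, the `generated_discount` stand-in, and the contract "a discount never exceeds the order total" are all hypothetical examples, not a prescribed pattern.

```python
def guarded(func, check_input, check_output, fallback):
    """Wrap an opaque function with boundary checks instead of inspecting its body."""
    stats = {"calls": 0, "rejected_inputs": 0, "rejected_outputs": 0}

    def wrapper(value):
        stats["calls"] += 1
        if not check_input(value):
            stats["rejected_inputs"] += 1
            return fallback
        result = func(value)
        if not check_output(value, result):
            stats["rejected_outputs"] += 1
            return fallback
        return result

    wrapper.stats = stats
    return wrapper


# Stand-in for a generated implementation we choose not to read line by line.
def generated_discount(order_total):
    return order_total * 0.1 if order_total < 1000 else order_total * 1.5


discount = guarded(
    generated_discount,
    check_input=lambda total: isinstance(total, (int, float)) and total >= 0,
    check_output=lambda total, d: 0 <= d <= total,  # a discount never exceeds the order
    fallback=0.0,
)

print(discount(200))   # within the contract
print(discount(2000))  # violates the output contract, so the fallback is returned
print(discount.stats)
```

The counters matter as much as the fallback: a rising `rejected_outputs` figure is a behavioural signal that the intent and the generated implementation have drifted apart.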

Preventive debugging starts long before incidents

One of the most underestimated shifts in 2026 is the rise of preventive debugging. Not in the sense of eliminating failures, but in making failures legible when they happen.

Preventive debugging is not a separate phase. It is the outcome of decisions made during design:

how components communicate

how much implicit coupling exists

which assumptions are enforced explicitly

and which signals are considered first-class

Systems designed without these considerations tend to fail opaquely. Systems designed with them tend to fail clearly.

| Design choice | Debugging consequence |
| --- | --- |
| Implicit coupling | Ambiguous failures |
| Hidden retries | Misleading metrics |
| Overloaded services | Unclear ownership |
| Clear boundaries | Faster interpretation |
| Explicit constraints | Predictable failure modes |

The goal is not to prevent every incident. It is to ensure that when incidents occur, the system explains itself as much as possible.
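The "hidden retries" row is easy to make concrete. If a retry helper only reports final outcomes, a struggling dependency looks perfectly healthy. The sketch below counts logical calls and physical attempts separately; the `RetryStats` class, the `call_with_retries` helper, and the flaky dependency are hypothetical names invented for this example.

```python
import time


class RetryStats:
    """Counts logical calls and physical attempts separately."""

    def __init__(self):
        self.calls = 0
        self.attempts = 0
        self.failures = 0

    @property
    def amplification(self):
        """Attempts per call; 1.0 means no retries were needed."""
        return self.attempts / self.calls if self.calls else 0.0


def call_with_retries(operation, stats, max_attempts=3, delay_s=0.0):
    """Retry an operation, recording every attempt instead of hiding it."""
    stats.calls += 1
    for attempt in range(1, max_attempts + 1):
        stats.attempts += 1
        try:
            return operation()
        except ConnectionError:
            if attempt == max_attempts:
                stats.failures += 1
                raise
            time.sleep(delay_s)


def flaky_factory(fail_first):
    """Build a hypothetical dependency that fails a fixed number of times first."""
    state = {"remaining": fail_first}

    def operation():
        if state["remaining"] > 0:
            state["remaining"] -= 1
            raise ConnectionError("upstream unavailable")
        return "ok"

    return operation


stats = RetryStats()
for fail_first in (0, 2, 1, 0):
    call_with_retries(flaky_factory(fail_first), stats)

print(stats.failures, stats.attempts, stats.amplification)
```

Here every call eventually succeeds, so a success-rate dashboard would show nothing unusual, yet the dependency received 7 attempts for 4 calls. Exposing that ratio is one way to make the failure legible before it becomes an incident.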

Distributed systems have changed where debugging happens

In earlier architectures, debugging was something engineers did after something went wrong. In distributed systems, debugging increasingly happens during normal operation.

This is not because teams are constantly firefighting, but because understanding system behaviour requires observing it in motion. Many questions cannot be answered by snapshots or postmortems alone.

Modern debugging workflows include:

live inspection with strict safeguards

traffic shadowing and replay

progressive rollouts as diagnostic tools

and controlled experiments in production-like environments

This approach can feel uncomfortable to teams raised on strict separation between development and operations. Yet it reflects the reality that some truths only reveal themselves at scale.

The skill is not avoiding production debugging altogether.

The skill is doing it deliberately, safely, and with clear intent.
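Traffic shadowing, one of the workflows listed above, can be sketched in a few lines: the current implementation answers the user, while a candidate sees the same request and only its differences are recorded. This is a simplified, synchronous, in-process version under stated assumptions; real shadowing usually runs the candidate asynchronously or on mirrored traffic, and the handler names and request shape here are hypothetical.

```python
def shadow(primary, candidate, mismatches):
    """Serve from primary; run candidate on the same input and record differences."""

    def handler(request):
        response = primary(request)
        try:
            shadow_response = candidate(request)
            if shadow_response != response:
                mismatches.append((request, response, shadow_response))
        except Exception as exc:  # a shadow failure must never reach the user
            mismatches.append((request, response, repr(exc)))
        return response

    return handler


def current_total(request):
    """The implementation that currently serves users."""
    return request["qty"] * request["unit_cents"]


def candidate_total(request):
    """Hypothetical rewrite that mishandles bulk orders."""
    total = request["qty"] * request["unit_cents"]
    return total - 100 if request["qty"] >= 10 else total


mismatches = []
handle = shadow(current_total, candidate_total, mismatches)
responses = [handle({"qty": qty, "unit_cents": 250}) for qty in (1, 5, 10, 20)]

print(responses)
print(len(mismatches), "mismatching requests")
```

Users only ever receive the primary's answer. The mismatch list is the diagnostic output, and it shows under which conditions the candidate diverges (here, bulk orders) rather than merely that it does.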

Why Australian teams feel this shift more sharply

For teams operating from Australia, these changes are often felt earlier and more intensely. Distance from major cloud regions, reliance on global services, and increasingly distributed teams amplify the cost of unclear system behaviour.

Latency, regional failover, and cross-timezone dependencies are not edge cases. They are everyday concerns. When debugging relies on assumptions that only hold in tightly coupled environments, those assumptions break quickly.

This is why architectural debuggability matters so much for Australia-based products. Clear signals, explicit boundaries, and behavioural understanding reduce the penalty of distance.

Local context does not change the principles of debugging in 2026.

It simply removes the illusion that complexity can be ignored.

The Australian Penalty: Debugging Across Distance

For teams operating in Australia, the shift toward behavioural debugging is even more critical. Distance from major cloud regions and reliance on global, asynchronous services mean that latency and regional failover are not edge cases—they are constant variables.

In Australia, a "bug" is often just a physical reality of the network. When your debugging relies on assumptions that only hold in tightly coupled, low-latency environments, those assumptions break the moment they hit the Pacific crossing. Architectural debuggability—clear signals and explicit boundaries—is the only way to reduce the "penalty of distance."
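A back-of-the-envelope calculation shows why distance behaves like a constant rather than a fault. Light in optical fibre travels at roughly 200,000 km/s, about two thirds of its speed in vacuum. The 12,000 km path length below is an assumed round figure for a trans-Pacific route, not a measured value, and real routes add switching and routing overhead on top of this floor.

```python
FIBRE_SPEED_KM_PER_S = 200_000  # approximate speed of light in optical fibre


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time imposed by propagation delay alone."""
    return 2 * distance_km * 1000 / FIBRE_SPEED_KM_PER_S


def sequential_cost_ms(distance_km, round_trips):
    """Latency floor when a request needs several dependent round trips."""
    return round_trips * min_round_trip_ms(distance_km)


distance_km = 12_000  # assumed path length for illustration
print(min_round_trip_ms(distance_km))
print(sequential_cost_ms(distance_km, 4))
```

Under these assumptions a single round trip cannot take less than about 120 ms, and a flow that chains four dependent calls across the same path starts at roughly half a second before any server does work. No amount of code-level debugging removes that floor; only design choices such as fewer round trips or closer regions do.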

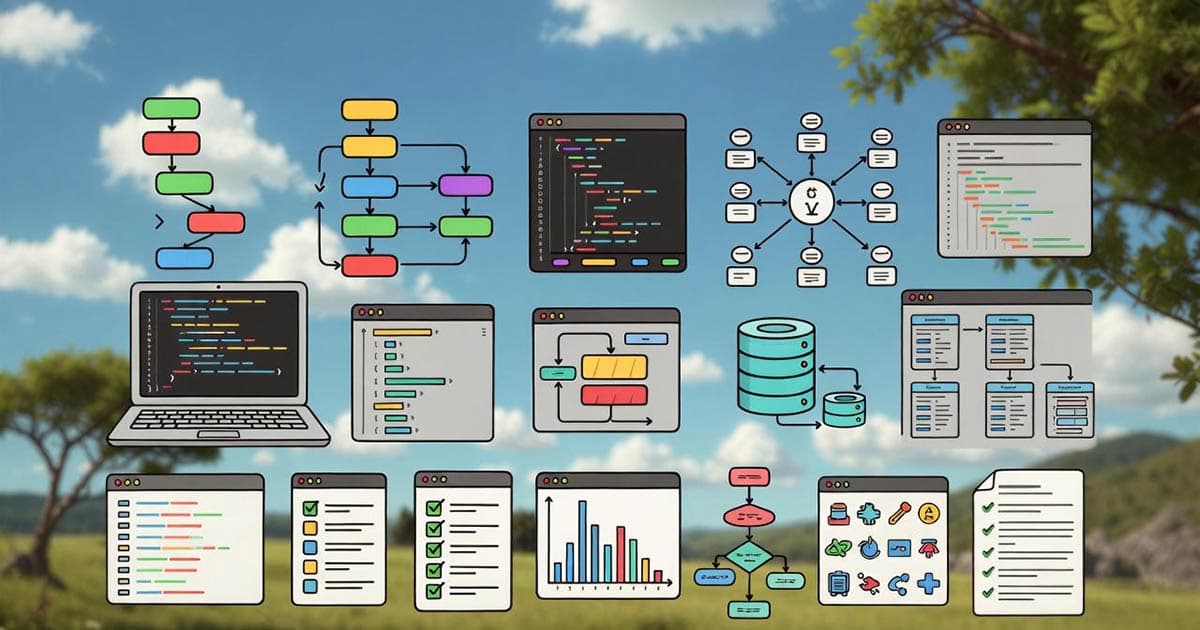

Debugging as a design discipline

In practice, this shift in debugging changes how teams build software long before anything goes wrong. Systems that are easier to understand under pressure tend to be simpler, clearer, and more deliberate by design. They rely less on heroic debugging efforts and more on architectures that surface their own behaviour.

This is especially visible in modern web and app development, where performance, reliability, and data flow are tightly coupled. When debugging is treated as a design concern rather than a reactive task, decisions around data modelling, service boundaries, and system visibility change noticeably. The result is software that not only runs, but explains itself when it doesn’t behave as expected.

For teams working with complex databases or data-heavy platforms, this mindset becomes even more critical. Debugging issues that span application logic, database behaviour, and analytical pipelines requires a shared understanding of how data moves, transforms, and accumulates meaning over time. Without that clarity, even well-built systems can become opaque surprisingly quickly.

At Flamincode, this perspective shapes how we approach app and web development, database administration, and business intelligence projects: not as isolated services, but as parts of systems that need to remain understandable as they grow. Debugging, in that sense, is not a separate activity. It is a signal of whether the system was designed with clarity in mind.

Frequently Asked Questions

Why is debugging modern software systems becoming more difficult in 2026?

Because modern software systems are more distributed, dynamic, and data-driven than before. Many failures emerge from interactions between components rather than isolated bugs, making behaviour harder to trace to a single cause.

How is debugging different in distributed systems compared to traditional architectures?

In distributed systems, failures often depend on timing, load, and cross-service interactions that only occur in production. Debugging, therefore, shifts from local reproduction to observing patterns across services, environments, and time.

Can debugging really be designed into software architecture?

Yes. Architectural decisions such as clear service boundaries, explicit constraints, and meaningful observability signals directly affect how understandable a system is when it misbehaves. Systems designed with debuggability in mind tend to fail more transparently.

Why are logs no longer enough for effective debugging?

Logs capture individual events but rarely explain system behaviour over time. Modern debugging relies on correlating logs with metrics, traces, and contextual signals to understand why performance or reliability degrades under specific conditions.

How does AI-generated code change the way teams debug software?

AI-generated code often obscures intent, making line-by-line inspection less effective. Debugging shifts toward validating assumptions, constraints, and outcomes rather than focusing solely on implementation details.

Admin

Mostafa is a wordsmith, storyteller, and language artisan who weaves narratives and paints vivid imagery across digital landscapes with a spirited pen. He embraces the art of crafting compelling content as a copywriter and content manager.
