How Random Video Chat Platforms Are Built

By M Chetmars

One of our earlier blog posts about random video chat apps drew a lot of reader interest, so as a web development company we decided to go deeper in this article.

Random video chat platforms look simple on the surface.

A user opens the app, taps a button, and within seconds they are connected to someone new. If the conversation does not work, they skip and move on.

That experience feels lightweight. But the system behind it usually is not.

At Flamincode, we see this type of product as a strong example of how simple user experiences often depend on much deeper software architecture. A random video chat platform is not only a video feature. It is a real-time system that has to manage connection quality, user flow, session state, moderation, and scale all at once.

Short answer:

Random video chat platforms are usually built using WebRTC for live media, a signalling layer for connection setup, a matchmaking engine for pairing users, and backend systems that manage sessions, safety, and performance. The difficult part is not making video appear. The difficult part is making the whole experience stable, fast, and safe under real usage.

| Core Layer | What It Does | Why It Matters |
| --- | --- | --- |
| WebRTC | Handles live audio and video streaming | Enables low-latency communication |
| Signalling Server | Exchanges connection setup data | Allows sessions to begin |
| Matchmaking Engine | Pairs users in real time | Creates the random experience |
| STUN/TURN Infrastructure | Helps users connect across networks | Prevents failed calls |
| Session Backend | Manages state, skips, and reconnects | Keeps the system consistent |
| Moderation Systems | Handles abuse and reporting | Protects users and trust |

What Makes Random Video Chat Apps More Complex Than They Look

One of the biggest reasons these products get underestimated is that the interface looks small.

There is usually not much to see. A start action, a video feed, a skip option, and maybe a report button. Compared with a large SaaS dashboard or an eCommerce platform, it can seem like a much lighter product.

But almost none of the real complexity lives in the interface.

The complexity starts when the product promises a live interaction between two strangers who may be using different devices, browsers, network conditions, and locations. The system has to know who is available, who should be matched, how to connect them, and what to do when one side disconnects or skips.

And all of this has to happen quickly.

That is what makes random video chat platforms different from standard web applications. A delay that might feel acceptable in another product often feels broken here. A failed call is not a small bug. It interrupts the core value of the platform.

This is why products like this need to be treated as real-time communication systems, not only as front-end experiences.

The Core Architecture Behind a Random Video Chat Platform

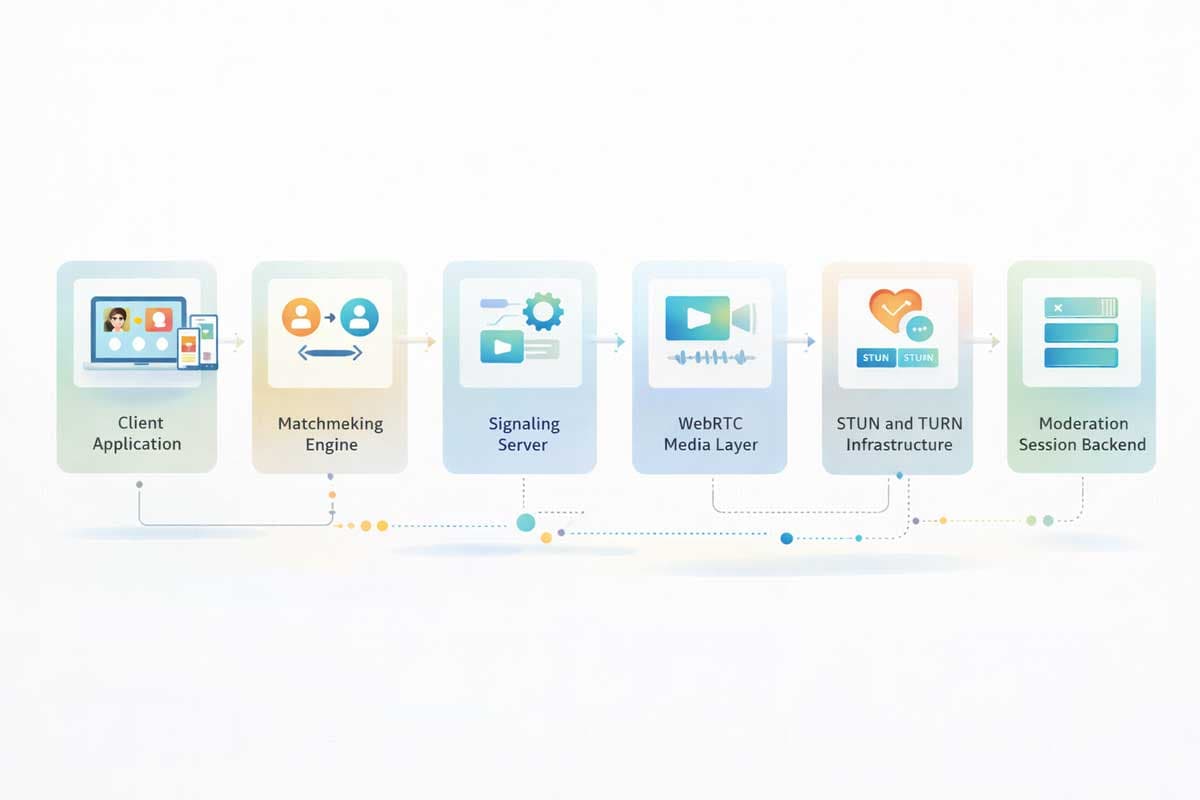

To understand how these platforms are built, it helps to think of them as several connected systems rather than one application.

The first layer is the client application. This is the part the user sees. It handles camera and microphone access, displays local and remote video, shows connection states, and lets the user start, skip, or end a conversation.

Behind that sits the matchmaking layer. This is where the product decides who should be paired with whom. It tracks who is online, who is waiting, and who is already in a session. If this part feels slow or inconsistent, the whole product feels weak.

Then comes the signalling layer. Before two people can speak, their devices need to exchange setup information, such as session descriptions and candidate network addresses. That exchange happens through signalling, often over a persistent connection such as a WebSocket between each client and the server.

The actual live media delivery usually relies on WebRTC. This is what handles real-time audio and video, keeps latency low, and helps the conversation feel natural rather than delayed or unstable.

At the same time, the product also needs a session backend. This layer keeps track of who is currently matched, who skipped, who disconnected unexpectedly, and what should happen next. That may sound simple, but in a live system this state changes constantly.
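One way to keep that constantly changing state consistent is to model each session as a small state machine that rejects illegal transitions. The sketch below is only an illustration under assumed names: the four states, the `ALLOWED` table, and the `Session` class are hypothetical and not taken from any particular platform.

```python
from enum import Enum
from typing import Optional


class SessionState(Enum):
    WAITING = "waiting"        # in the queue, not yet paired
    CONNECTING = "connecting"  # paired, connection setup in progress
    ACTIVE = "active"          # media is flowing
    ENDED = "ended"            # skipped, disconnected, or failed


# Allowed transitions; anything else is treated as a stale or duplicate event.
ALLOWED = {
    SessionState.WAITING: {SessionState.CONNECTING, SessionState.ENDED},
    SessionState.CONNECTING: {SessionState.ACTIVE, SessionState.ENDED},
    SessionState.ACTIVE: {SessionState.ENDED},
    SessionState.ENDED: set(),
}


class Session:
    def __init__(self, user_id: str) -> None:
        self.user_id = user_id
        self.state = SessionState.WAITING
        self.end_reason: Optional[str] = None

    def transition(self, new_state: SessionState, reason: Optional[str] = None) -> bool:
        """Apply a transition if it is legal; return False and change nothing otherwise."""
        if new_state not in ALLOWED[self.state]:
            return False
        self.state = new_state
        if new_state is SessionState.ENDED:
            self.end_reason = reason
        return True
```

With this shape, a late "connected" event that arrives after a skip is simply rejected instead of reviving a session that has already ended.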

And finally, there is the moderation and safety layer. The moment strangers can connect anonymously, the platform becomes exposed to misuse. That means moderation is not something to “add later”. It is part of the architecture from the beginning.


Why These Platforms Feel Easy to Prototype but Hard to Productise

This is one of the most important distinctions in the whole build.

A rough prototype of a random video chat platform is not especially difficult to make. With a simple queue, a real-time communication layer, and a lightweight interface, a developer can get two users talking fairly quickly.

That often creates a false sense of completion.

A prototype only needs to prove that the concept works once. A real product needs to keep working under unpredictable behaviour.

Users deny permissions. They refresh unexpectedly. They switch networks. They disconnect halfway through a session. They reconnect in messy states. Some skip instantly. Some create usage patterns that break simple matching logic.

These are not edge cases in a live product. They are normal behaviour.
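As one illustration of treating this as normal behaviour, a backend might give a dropped user a short grace window before ending their session. This is a minimal sketch under assumptions: the `PresenceTracker` name, the 10-second default, and the idea of passing `now` in explicitly (which keeps the logic testable) are all hypothetical choices.

```python
from typing import Dict, List


class PresenceTracker:
    """Gives a dropped user a short grace window before their session is ended."""

    def __init__(self, grace_seconds: float = 10.0) -> None:
        self.grace_seconds = grace_seconds
        self.dropped_at: Dict[str, float] = {}

    def disconnected(self, user_id: str, now: float) -> None:
        # Keep the earliest drop time if several disconnect events arrive.
        self.dropped_at.setdefault(user_id, now)

    def reconnected(self, user_id: str, now: float) -> bool:
        """Return True if the user came back in time to resume the session."""
        dropped = self.dropped_at.pop(user_id, None)
        return dropped is not None and now - dropped <= self.grace_seconds

    def expire(self, now: float) -> List[str]:
        """Return users whose grace window has passed; their sessions should end."""
        gone = [u for u, t in self.dropped_at.items() if now - t > self.grace_seconds]
        for user_id in gone:
            del self.dropped_at[user_id]
        return gone
```

A periodic task could call `expire` and end the sessions of the returned users, while a refresh or brief network switch that resolves inside the window resumes quietly.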

That is why a working demo and a production-ready platform are two very different things. The hidden engineering effort usually begins after the first successful call, not before it.

What the Product Is Really Selling

It is easy to think these platforms are selling video chat.

But video is only the transport layer.

What the product is really selling is instant connection with minimal friction. The value is not that a user can technically talk to someone. The value is that they can do it quickly, repeatedly, and with as little interruption as possible.

That changes how the product should be designed.

If matching is slow, the experience feels weak. If calls fail too often, trust drops. If moderation is poor, users leave. Small points of friction become much more visible in a product where the promise is speed and spontaneity.

That is why architecture matters so much here. The interface might look simple, but the experience depends on many invisible systems working together cleanly.


How Two Strangers Get Matched in Real Time

What users experience as “random” is usually not pure randomness. It is usually controlled matching.

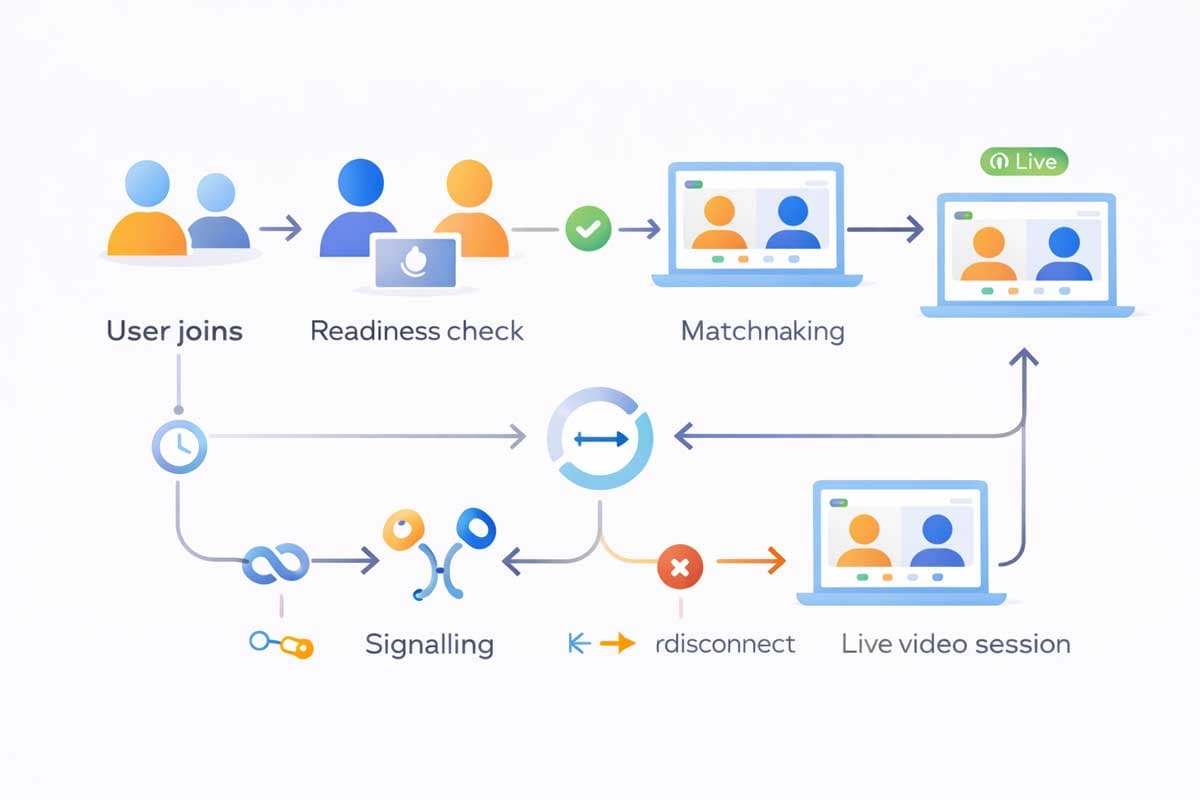

When someone presses start, the platform places them into an active waiting state. At that point, the system needs to know whether the user is genuinely ready to connect, whether their permissions are enabled, and whether they are already tied to a broken or unfinished session.

From there, the matching engine decides who should be paired next.

A simple version of the product might only look for another available user and create a session. That is enough for an MVP. But in real usage, user behaviour becomes far messier than that.

People skip instantly, disconnect halfway through, refresh unexpectedly, or reconnect after unstable internet. Some should not be matched with the same person again too quickly. Others may appear available while not truly being ready.

That is why the matching layer usually becomes more than a random queue. It becomes a real-time coordination system that manages availability, session state, and user flow between conversations.

And if this part feels slow or unstable, the entire product starts to feel broken.
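The difference between a plain queue and controlled matching can be sketched in a few lines. The example below is a hedged, in-memory illustration only: `Matchmaker`, `enqueue`, and `leave` are hypothetical names, and a production system would also need to expire the recent-partner history and share state across servers.

```python
from collections import deque
from typing import Deque, Dict, Optional, Set, Tuple


class Matchmaker:
    """Pairs waiting users first-come-first-served, skipping recent partners."""

    def __init__(self) -> None:
        self.waiting: Deque[str] = deque()
        self.recent: Dict[str, Set[str]] = {}  # user_id -> partners already seen

    def enqueue(self, user_id: str) -> Optional[Tuple[str, str]]:
        """Add a ready user; return a (waiting_user, new_user) pair if one is possible."""
        if user_id in self.waiting:
            return None  # duplicate start, e.g. a double tap or a refresh
        for candidate in self.waiting:
            if candidate not in self.recent.get(user_id, set()):
                self.waiting.remove(candidate)
                self._remember(user_id, candidate)
                return (candidate, user_id)
        self.waiting.append(user_id)
        return None

    def leave(self, user_id: str) -> None:
        """Remove a user who disconnected or cancelled while waiting."""
        if user_id in self.waiting:
            self.waiting.remove(user_id)

    def _remember(self, a: str, b: str) -> None:
        self.recent.setdefault(a, set()).add(b)
        self.recent.setdefault(b, set()).add(a)
```

Here two users who have just talked are not paired again: if only they are waiting, both stay in the queue until someone new arrives.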

How Video and Audio Are Delivered

Once two users are matched, the platform still needs to make a live conversation happen.

This is where many platforms rely on WebRTC, because it is built for low-latency real-time communication across browsers and devices. But WebRTC does not work in isolation.

Before audio and video can begin, the two sides need to exchange connection details through a signalling layer. This setup process allows both users to negotiate how the session will start.

After that, live media begins to flow.
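On the server side, signalling can be as simple as forwarding setup messages between the two matched peers. The sketch below assumes a hypothetical message shape with a `type` field of `offer`, `answer`, or `candidate` (mirroring WebRTC's offer/answer exchange and ICE candidates). `SignallingHub` and its `send` callbacks are illustrative stand-ins; in a real deployment each callback would typically wrap a WebSocket connection.

```python
from typing import Callable, Dict

Send = Callable[[dict], None]


class SignallingHub:
    """Forwards setup messages between the two peers of a matched pair."""

    RELAYED_TYPES = {"offer", "answer", "candidate"}

    def __init__(self) -> None:
        self.connections: Dict[str, Send] = {}  # user_id -> function that delivers a message
        self.partner: Dict[str, str] = {}       # user_id -> matched partner's user_id

    def connect(self, user_id: str, send: Send) -> None:
        self.connections[user_id] = send

    def pair(self, a: str, b: str) -> None:
        self.partner[a] = b
        self.partner[b] = a

    def handle(self, sender: str, message: dict) -> bool:
        """Relay a message to the sender's partner; return False if it was dropped."""
        if message.get("type") not in self.RELAYED_TYPES:
            return False
        target = self.partner.get(sender)
        if target is None or target not in self.connections:
            return False
        # Tag the message with its origin so the receiver knows who sent it.
        self.connections[target]({**message, "from": sender})
        return True
```

The server never inspects the media itself in this design. It only passes along the details the two browsers need to negotiate a connection.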

The challenge is that users do not connect under ideal conditions. Some are on weak Wi-Fi. Some are on mobile data. Some are behind restrictive networks. That means the platform needs a reliable way to establish and maintain sessions across inconsistent environments.

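This is why STUN and TURN infrastructure often becomes necessary. In simple terms, a STUN server helps a device discover the public address it can be reached on, while a TURN server relays the media when a direct connection between the two devices cannot be established.

That relay traffic is also one of the reasons random video chat products become more expensive at scale than they first appear: every relayed call consumes server bandwidth for as long as it lasts.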
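A rough back-of-the-envelope calculation shows why. When a call falls back to a TURN relay, the media passes through the server instead of flowing directly between users. The function below is a sketch for estimating that relayed volume. Every input is an assumption to be replaced with measured values, and the function name and parameters are hypothetical.

```python
def monthly_relay_gb(
    concurrent_calls: float,
    relayed_fraction: float,
    kbps_per_direction: float,
    hours_per_day: float,
    days: int = 30,
) -> float:
    """Estimate data sent out by a TURN relay per month, in gigabytes (10^9 bytes)."""
    relayed_calls = concurrent_calls * relayed_fraction
    # A relayed call sends one media stream out to each of the two participants.
    bits_per_second = relayed_calls * kbps_per_direction * 1000 * 2
    seconds = hours_per_day * 3600 * days
    return bits_per_second * seconds / 8 / 1e9
```

With purely illustrative inputs, such as 100 concurrent calls around the clock, 20% of them relayed, and 1,000 kbps per direction, `monthly_relay_gb(100, 0.2, 1000, 24)` comes to roughly 12,960 GB per month. The actual relayed share and bitrate vary widely between products and audiences.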

Why Moderation Is One of the Hardest Parts

Moderation is one of the easiest things to underestimate in this kind of product.

The moment strangers can connect anonymously, the platform becomes exposed to misuse. And if that misuse is not controlled, trust disappears quickly.

At a minimum, the product needs reporting tools and some way to respond to harmful behaviour. But in most serious products, moderation has to go much further than that.

The system often needs to detect repeat offenders, apply restrictions, manage reports, and create enough friction to stop the same harmful behaviour from repeating too easily.
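A very small version of that logic is a tracker that counts reports from distinct users and applies a temporary block once a threshold is reached. This is a sketch under assumptions, not a recommended policy: `ReportTracker`, the threshold of three, and the one-hour block are hypothetical values chosen for illustration.

```python
from typing import Dict, Set


class ReportTracker:
    """Counts distinct reporters per user and applies a temporary block."""

    def __init__(self, threshold: int = 3, block_seconds: float = 3600.0) -> None:
        self.threshold = threshold
        self.block_seconds = block_seconds
        self.reporters: Dict[str, Set[str]] = {}
        self.blocked_until: Dict[str, float] = {}

    def report(self, reporter: str, target: str, now: float) -> bool:
        """Record a report; return True if this report triggered a block."""
        if reporter == target:
            return False
        seen = self.reporters.setdefault(target, set())
        seen.add(reporter)  # a set ignores repeat reports from the same person
        if len(seen) >= self.threshold and not self.is_blocked(target, now):
            self.blocked_until[target] = now + self.block_seconds
            seen.clear()
            return True
        return False

    def is_blocked(self, user_id: str, now: float) -> bool:
        return self.blocked_until.get(user_id, 0.0) > now
```

Counting distinct reporters rather than raw reports makes it harder for one user to get someone blocked by reporting them repeatedly. The matching layer could then call `is_blocked` before placing a user in the queue.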

What makes this difficult is speed.

In many other products, moderation can happen after the fact. In random video chat, bad behaviour often happens in real time. That gives the platform far less room to react.

That is why moderation is not a side feature. It is part of the product’s core architecture.

What Happens When the Platform Starts Scaling

A lot of random video chat products feel stable at low traffic.

Then usage grows, and the weak points begin to show.

Matching slows down. Sessions fail more often. Skip behaviour becomes chaotic. Reconnection states get messy. Media fallback becomes more common. Moderation load increases. Infrastructure cost starts rising faster than expected.

This happens because scale exposes problems that small testing never reveals.

At low concurrency, almost any system can feel acceptable. At higher concurrency, the platform needs to behave like a live operational system. The queue has to remain responsive. Session state has to stay clean. Connection setup has to keep working under pressure.

And the team needs enough visibility into the system to understand where failures are happening and why.
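One lightweight way to get that visibility is to count how many attempts reach each stage of the flow and why the rest fail. The sketch below is illustrative only: the stage names, the `FunnelMetrics` class, and the failure reasons are hypothetical, and a real deployment would usually export such counters to a monitoring system rather than hold them in memory.

```python
from collections import Counter


class FunnelMetrics:
    """Counts how many attempts reach each stage, and why they fail."""

    STAGES = ("queued", "matched", "connected")

    def __init__(self) -> None:
        self.reached: Counter = Counter()
        self.failures: Counter = Counter()

    def reach(self, stage: str) -> None:
        if stage not in self.STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self.reached[stage] += 1

    def fail(self, stage: str, reason: str) -> None:
        # Keyed by (stage, reason) so failures can be grouped either way.
        self.failures[(stage, reason)] += 1

    def conversion(self, from_stage: str, to_stage: str) -> float:
        """Share of attempts at from_stage that went on to reach to_stage."""
        started = self.reached[from_stage]
        return self.reached[to_stage] / started if started else 0.0
```

If the share of matched users who reach "connected" drops as traffic grows, the problem is more likely in connection setup than in the queue, which tells the team where to look first.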

Without that, the product becomes difficult to stabilise.


MVP vs Production-Ready Platform

One of the most useful distinctions in this type of build is the difference between an MVP and a production-ready platform.

An MVP is designed to prove the concept. It usually includes basic matching, live video, skip behaviour, and perhaps a lightweight reporting flow.

That is enough to test whether people want the product.

But a production-ready version needs much more than concept validation. It needs stronger stability, better session handling, deeper moderation, stronger recovery logic, and infrastructure that can tolerate real traffic.

| Build Stage | What It Usually Includes | What It Usually Lacks |
| --- | --- | --- |
| MVP | Basic matching, video session, skip, simple report flow | Stability, moderation depth, scale readiness |
| Growth Stage | Better queue handling, analytics, reconnection logic | Full operational resilience |
| Production Platform | Scalable infrastructure, moderation systems, observability | Higher delivery and infrastructure complexity |

This distinction matters because many teams budget for an MVP and accidentally expect production-level behaviour from it.

That is usually where the trouble starts.

Should You Build It From Scratch or Use Existing Infrastructure?

This is one of the most practical questions to ask early.

In many cases, it does not make sense to build every technical layer from scratch. The smarter decision is often to decide which part of the product is truly unique, and which parts are infrastructure problems that already have reliable solutions.

For most products in this space, the competitive edge is not “we built video transport ourselves”. The real value usually comes from the matching experience, the niche use case, the moderation design, the retention model, or the overall product flow.

That means many teams are better off using existing communication infrastructure while focusing custom engineering effort on the layers that make the product commercially valuable.

This is often where a stronger technical strategy saves time, budget, and future rework.

Final Thoughts

Random video chat platforms look lightweight on the surface, but they are much heavier underneath.

They combine real-time communication, session orchestration, behavioural unpredictability, moderation, and infrastructure pressure into one product. That is why they are harder to build well than they first appear.

The challenge is not making two users talk once.

The challenge is making that experience stable, fast, and safe over and over again.

If you are planning to build a real-time platform, whether it is a random video chat app, a live consultation product, or a niche communication tool, the most important early decision is rarely the interface.

It is the architecture.

If you want to build it properly from the start, Flamincode can help you plan and build a platform designed for real usage, not only for launch.

Frequently Asked Questions

What technology is usually used to build random video chat platforms?

Most modern platforms use WebRTC alongside signalling servers, matchmaking logic, backend session management, and moderation systems.

Is WebRTC enough to build a platform like Omegle?

No. WebRTC handles real-time media, but the full platform still needs matching, signalling, moderation, state management, and infrastructure planning.

Are random video chat platforms expensive to run?

They often become significantly more expensive as usage grows, especially once fallback media infrastructure, moderation, and operational monitoring are added.

What is the hardest part of building a random video chat platform?

In many cases, the hardest part is not video itself. It is building a system that remains reliable and safe under real user behaviour.

Can this type of platform be built as an MVP first?

Yes. In many cases that is the right approach. But an MVP should be treated as a validation version, not a finished production platform.

